All across the globe, there is a big push to build data centers to power new “artificial intelligence” technology. At the same time, there is also a huge anti-AI backlash. But what exactly is artificial intelligence anyway, and why do people feel so strongly about it?

What is artificial intelligence?

The term artificial intelligence has been around a long time and can refer to different things, but when people talk about AI today, they typically are referring to generative AI. IBM defines generative AI as “artificial intelligence (AI) that can create original content such as text, images, video, audio or software code in response to a user’s prompt or request.” This tech has become quite trendy in the last few years. Social media users are using AI to generate pictures and posts. Some people have even created bot accounts on social media that can reply to others’ posts and act as if they were human beings.

One popular type of generative AI is called a large language model, or an LLM. LLMs are fed large quantities of text to “read.” From this training, they learn to predict which word should come next. In this way, LLMs can “speak” and power chatbots like ChatGPT. Proponents of LLMs say that they can boost productivity by doing useful tasks like summarizing articles. Opponents of the trendy technology say that the downsides outweigh the benefits.
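To make the idea of "predicting the next word" concrete, here is a minimal sketch in Python. It is a toy word-pair counter, not how a real LLM is built: real models use neural networks trained on vastly more text. The function names `train_bigrams` and `predict_next` and the tiny sample text are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def predict_next(followers, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical training text, chosen only to keep the example small.
corpus = "the cat sat on the mat . the cat ate the fish . the cat slept"
model = train_bigrams(corpus)

print(predict_next(model, "the"))    # "cat" follows "the" most often in this text
print(predict_next(model, "zebra"))  # None: the word never appeared in training
```

Notice that the program picks a word only because it was common in the training text. Nothing in the code checks whether the resulting sentence is true.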

What is an AI hallucination?

One major issue with generative AI is that it frequently generates “hallucinations” or “delusions.” Since computers are not sentient and have no perception of what is true and what is false, the machines often make things up. The problem is that human users have too much faith in the AI.

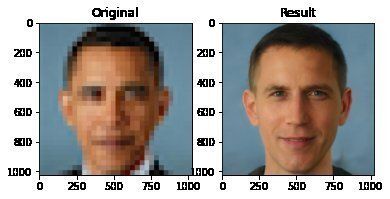

For example, let’s say you have a blurry photograph of someone and you want to know what they look like. If you tell the AI to enhance the photograph, it will add details, but these details are not being pulled from deep within the picture. The details are being created by the machine – in other words, it’s a “hallucination.” For instance, look at this image from an AI “Face Depixelizer” that generated a random white man when asked to make a picture of President Barack Obama less blurry:

Now imagine a scenario where police are trying to identify a crime suspect based on a blurry photograph. What if the AI generates an image that looks just like an innocent person instead? What if people don’t understand how the technology works and so it is used as “proof” of a crime in court?

Artificial intelligence is already appearing in courtrooms today. In fact, one judge has said, “I think AI-generated fake or modified evidence is happening much more frequently than is reported publicly.” There are people who have purposely submitted fake AI-generated legal documents and “evidence.” There are also people who are genuinely guilty but claim that all the evidence against them is AI-generated. As generative artificial intelligence becomes more and more realistic, it will become increasingly difficult to know what is real and what is not.

In the United States, these issues have led to bipartisan calls to study, regulate and/or ban the use of AI technology in courts. Senator Peter Welch has cited “substantial privacy and civil liberty concerns” raised by the use of AI in court. He and other senators are supporting a bill called the Research and Oversight of Artificial Intelligence in Courts Act of 2026, which would investigate AI text-to-speech tools.

What is AI psychosis?

There is also another type of hallucination related to generative AI use. This is what people are calling “AI psychosis” or “chatbot psychosis.”

The first chatbot was actually created long ago, in 1964. This chatbot was named Eliza. Eliza was programmed to “speak” in a therapeutic way, so that people could converse with it. It was a simple program that worked by scanning for certain keywords. However, many people were enthralled with it. Some people didn’t want to believe that “Eliza” wasn’t a real person. The chatbot’s creator, Joseph Weizenbaum, was disturbed. He wrote that “extremely short exposures to a relatively simple computer program could induce powerful delusional thinking in quite normal people.”

This has become known as the “Eliza effect.” Today’s chatbots are much more powerful than Eliza and many more people have access to them as well. Therefore, it isn’t surprising that these “delusions” have grown stronger. Nowadays people aren’t just mildly attached to their computers. They are dangerously devoted to them.
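To show how little is needed to produce a conversation-like reply, here is a rough Python sketch of keyword scanning, the approach Eliza is described as using above. The keywords and canned responses are hypothetical and are not taken from Weizenbaum's original program.

```python
# Hypothetical keyword rules for illustration; not Weizenbaum's original script.
RULES = [
    ("mother", "Tell me more about your family."),
    ("sad", "I am sorry to hear you are sad. Why do you think that is?"),
    ("always", "Can you think of a specific example?"),
]
DEFAULT_REPLY = "Please go on."


def reply(message):
    """Return the canned response for the first rule whose keyword appears."""
    # Lowercase the message and strip basic punctuation from each word.
    words = [w.strip(".,!?") for w in message.lower().split()]
    for keyword, response in RULES:
        if keyword in words:
            return response
    # No keyword matched, so fall back to a generic prompt.
    return DEFAULT_REPLY


print(reply("I feel sad today."))
print(reply("Hello there"))
```

The program understands nothing about sadness or family. It only matches words against a list, yet the replies can feel attentive to the person typing.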

A number of chatbot users have gone on to harm themselves and/or others after talking to a chatbot. For example, a man in the United Kingdom believed that a character he spoke to with the Replika AI app was actually an angel. After speaking to this character for some time, he attempted to kill the Queen and was arrested.

Other chatbot users have lost huge sums of money, filed for divorce, and even joined cults. Some of these individuals had no prior history of mental illness. Other users had been struggling with poor mental health and AI use made it worse.

Victims of AI delusions are sometimes called “spiralers” because they are stuck in a spiral of computer-generated delusions. Help for spiralers and their loved ones can be found on The Human Line Project website. The project is currently studying chatbots like ChatGPT, Replika and Character.AI.

Artificial Intelligence Data Centers and Pollution

Where does artificial intelligence come from? Today’s advanced AI programs require powerful computers. That’s why AI companies need to build data centers. Unfortunately, these data centers can be damaging to human health and the environment. Data centers cause noise pollution and can worsen heart conditions and asthma.

The data centers for AI, much like Bitcoin mines, are often built in poor, rural areas. People living in these areas have reported increased stress, lung irritation and even trouble breathing. Construction dust from one data center in Mississippi has been found coating a local playground. Conditions like these have led to lawsuits, petitions and other protests against data centers.

AI data centers use massive amounts of water. According to the BBC, “AI-driven data centres could consume 1.7 trillion gallons of water globally by 2027.” Water near data centers can also become polluted, making it unsafe for people to drink.

The backlash against data centers is so strong that one woman in Kentucky turned down a $26 million offer for her land because she didn’t want a data center built on it.

Is there an AI bubble?

Artificial intelligence technology is currently being implemented in businesses across the world. Many people believe this is a bubble about to burst. According to Forbes, 95% of AI pilot projects end in failure. Additionally, AI data centers will owe an estimated $1.5 trillion in debt by 2028.

OpenAI’s Sora app, dedicated to AI-generated videos, has announced it is shutting down. Previously it had been reported that Disney was going to invest one billion dollars in the company, but this deal is no longer going forward.

With so many financial problems and health risks, and with such widespread opposition to data centers, it’s possible the AI bubble has already begun to pop.